I’m very fortunate to work for an employer that sends me to conventions to “get smart” and bring it back to apply to our IT systems. Having just concluded my first Aruba Atmosphere conference at The Cosmopolitan Hotel in Las Vegas, I wanted to take a few minutes to summarize my experience and provide feedback to the great people that organized and ran the event.

On-Site Registration

From the first day at on-site registration, I felt very welcome. I had received an e-mail with a bar-code to use at check-in, but I hadn’t yet seen it or printed it, so I simply searched for myself by name in the system on a touch-screen kiosk. The system printed my badge and a member of the event staff gave it to me with a lanyard and directions across the room. At that booth I had my badge scanned and received an Atmosphere 2015 bag with my requested t-shirt size.

Certified Training

Although the conference didn’t officially kick-off until Monday evening’s welcome reception, I arrived early for a 2+ day training class. I attended the WLAN Fundamentals class taught by Kimberly Graves (@kimberlyAgraves) and David Westcott (@davidwestcott), and other offerings included Mobility Fundamentals, Airwave Fundamentals, and ClearPass Fundamentals. These all were in preparation for different Aruba-based certifications, but I was more interested in learning the topic than taking a test. The class was great with sufficient breaks and well-written hands-on labs using remote access to VMware Horizon virtual desktops (referred to in the lab as virtual laptops or VLTs) connected to actual physical wireless NICs.

Welcome Reception

The official kickoff event was in the Tech Playground, the typical trade show floor with vendor booths that event attendees are familiar with. There was also a Tech Playground Theater that held certain presentations by Aruba and their partners who sponsored the show. Behind the assembly of booths was a large area of the ballroom replete with tables of food, such as carving stations with beef and other more international fare. Included with the food were several bars serving beer and soda.

Keynotes

There were two Keynotes held on Tuesday and Wednesday mornings in The Chelsea theater. Tuesday’s event featured Aruba’s President and CEO Dominic Orr with a special virtual visit by HP’s CEO Meg Whitman. We attendees had all received letters slipped under our hotel room doors officially announcing HP's rumored acquisition of Aruba, and Dom spent a fair amount of time trying to set the attendees at ease and give us confidence in Aruba’s future.

Wednesday’s keynote featured Aruba’s CTO and Co-Founder Keerti Melkote and showed off a number of Aruba’s recent technical innovations. The one that stands out in my mind was the Meridian-based Bluetooth Low-Energy (BLE) beacons and the ways they can be used in such industries as Retail, Medical, and Education.

Breakout Sessions

Since I’ve mainly been attending Cisco Live and VMworld the last few years, which are upwards of 20,000 strong, it was refreshing being at a conference with about 2500 people. One benefit was that the sessions were rarely packed and my badge was never scanned at the door. All the sessions I attended were very well presented, and the presenters usually brought along other people from their team such as technical marketing and product management. It was fantastic having access to these people to ask questions and provide direct feedback on their products.

I thought the spacing of time between the sessions was excellent and the lengths of the sessions were just about right. I would suggest the additional training class start Sunday morning instead of afternoon, so the class could be done earlier and we could have more time to attend the other great sessions.

I was hearing WAY too many ringing phones. Truth be told, I even forgot to silence mine and was bitten by it during a session. Please incorporate a standard slide deck that includes a “PLEASE SILENCE YOUR ELECTRONIC DEVICES” slide so we can show the speaker and other attendees the respect they deserve. Also, the last slide of the deck could say “Remember to fill out your survey.”

Meals

During my training sessions, before the official kickoff, meals provided were mainly bagels, cereal, muffins, and fresh fruit for breakfast (pretty good) and boxed meals for lunch (meh). The first day’s lunch was good with plain chips, apple, cookie, and choice of veggie, ham, or turkey (if I recall correctly). The second day’s boxed lunch included the apple and cookie, but the sandwiches were what I would consider fairly exotic and the chips were all choices that I personally didn’t appreciate. Fortunately there are many restaurants in the hotel that are just a short walk away. All meals had great camaraderie with attendees and great conversations.

After the official kick-off, meals were all hot buffet-style and tables were all set with silverware and glasses in advance. The plates were large and allowed us to get all the food we wanted without having to waste time going back for seconds. However, attendees would sometimes waste time looking for a spot at a table that didn’t already have used cutlery. Also, one would have to wait (though not more than a couple minutes) for conference center staff to come by with a pitcher of the meal’s drink selection and fill up your glass.

I suggest Aruba save some money and just provide silverware and napkins as we go through the buffet lines. Then have drink stations scattered around the room for us to choose from. Notably missing from EVERY meal was any selection of soda. This was really unacceptable to a lot of folks who like to have our caffeine but don’t drink coffee. Put some coolers with iced-down cans of soda and let us just grab our own. Even at breakfast. That way we can take a can with us to our first session after breakfast.

Breaks Between Sessions

During my certified training class, there was one break the first day that provided snacks, though they were almost completely gone by the time my class took our break. Also, I believe that break included soda (Diet Coke FTW!) with ice and cups. Most breaks the rest of the conference included a snack of some kind sponsored by one of Aruba's partners at the show (popcorn sponsored by MobileIron, for instance). Unfortunately, I don’t remember a single break the rest of the conference that offered soda. Repeated requests for this on Twitter apparently fell on deaf ears, so I purchased some myself from a small casino shop downstairs for a premium. Even some people I talked to that drink coffee (I don’t) said they were getting tired of it and would have enjoyed soda for a change.

Social Media

The display of social media was great around the conference center in the form of LCD TVs displaying tweets that used the show’s #ATM15 hashtag. I saw regularly released announcements on Twitter reminding us of certain events (e.g., “Don’t miss out on the most important meal of the day” with a fun graphic showing where breakfast was). I also commend the coordination shown by the social media team early in the week with folks on Twitter, particularly Monday morning when some folks couldn’t get on the wireless. Perhaps more people could be dedicated to “manning the Twitter account” for the week to be more responsive to needs and requests of attendees.

Another way to engage with social media is to take advantage of “influencers”: folks who blog, are active on social media, or are active on the Airheads Community forum. As I’ve seen at other conferences, I suggest tables with plenty of power plugs, either at the keynote or in a separate “hang space” where the keynotes get live-streamed, so influencers can take notes, write blogs, and engage in social media outlets like Twitter. The marketing value of these influencers shouldn’t be underestimated. Check out the podcast called “Geek Whisperers” for more ideas on this.

Tech Playground

Many Aruba partners in attendance had technical displays and smart people manning their booths to answer questions. In addition, there was a great variety of Aruba booths set up to showcase their latest technology. One challenge I had was in finding someone to answer a question about Aruba’s VIA remote-access VPN system. I happened to find an employee that talked with me about it, and it turns out this particular employee was quite involved in the setup of the Tech Playground.

While all the Aruba booths did a good job showing the latest tech, I suggest there be someplace set aside, either a set of booths or a bunch of large whiteboards, manned by TAC folks, technical marketing engineers, or some other experts in all the currently available and supported products. That way, there would be no doubt where someone like me should go to ask a question. Ideas for a name might be “Ask The Expert” or “Technical Solutions Clinic.”

Another idea, to help “newbies” like me get better acquainted with all the Aruba gear, the hardware displays could show all past and present gear produced by Aruba, a kind of “Aruba Archive” of sorts. The conference organizers could add onto this every year along with tags showing year and month introduced, date of last support, and some technical facts about each controller, AP, and physical appliance.

Atmosphere 2015 App

As with all great conferences, this one had a mobile app. Available on Android and iOS, it featured an interactive map using BLE beacons placed around the conference center to show accurate indoor location and turn-by-turn directions. The app also included “My Agenda” which is a must for those of us that sign up for classes and forget months later what we signed up for. :-) The information included in the app was extremely useful, albeit somewhat disorganized. I recommend streamlining it to allow one-touch access to My Agenda and make the Full Agenda more mobile-friendly. Kudos on the successful integration of Meridian technology and being able to showcase that for us! It was fun to look around and find some of the beacons and where they were hiding, and it was great getting popups such as “Welcome To Atmosphere 2015” when I first entered the lobby of the hotel.

One idea for the app would be to permit opt-in location tracking to let other attendees find where we are. This would facilitate impromptu meetings, though it should be opt-in to prevent potential privacy concerns (a.k.a. the “creepiness factor”).

The app included the ability to fill out surveys for each session, which was very convenient! However, asking us to rate the session and speaker isn’t relevant for meals. Please make sure the survey questions are relevant for the type of session. If we check into a room for a session by either being scanned or by location tracking of our phone, a database can then tell if we’ve attended and only ask us to answer survey questions for the sessions we’ve attended. There’s no need to ask me to rate a session that I didn’t attend, and if I attended one that wasn’t on my schedule to begin with, I should be prompted to answer survey questions for THAT session instead.
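To make that survey idea a bit more concrete, here is a rough Python sketch of how it might work. Everything in it is my own assumption for illustration: the `Session` record, the session IDs, the `QUESTIONS_BY_KIND` table, and the `surveys_for` function are hypothetical and have nothing to do with how the actual Atmosphere app is built. The point is simply that surveys are driven by where I actually checked in, not by what was on my schedule, and that the questions depend on the type of session.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    """Hypothetical catalog entry; not the real app's data model."""
    session_id: str
    title: str
    kind: str  # e.g. "breakout", "keynote", "meal"


# Hypothetical question sets, keyed by session type.
QUESTIONS_BY_KIND = {
    "breakout": ["Rate the session content", "Rate the speaker"],
    "keynote": ["Rate the keynote content", "Rate the speaker"],
    "meal": ["Rate the food", "Rate the service"],
}
DEFAULT_QUESTIONS = ["Rate your overall experience"]


def surveys_for(checkins: set[str], catalog: dict[str, Session]) -> dict[str, list[str]]:
    """Return survey questions only for sessions the attendee checked into.

    `checkins` holds session IDs recorded by a badge scan or by location
    tracking. The attendee's planned schedule is deliberately not an input.
    """
    surveys = {}
    for session_id in sorted(checkins):
        session = catalog.get(session_id)
        if session is None:
            continue  # check-in for something not in the catalog; skip it
        surveys[session_id] = QUESTIONS_BY_KIND.get(session.kind, DEFAULT_QUESTIONS)
    return surveys


catalog = {
    "B101": Session("B101", "Show Network Lessons Learned", "breakout"),
    "B205": Session("B205", "A breakout I signed up for but skipped", "breakout"),
    "LUNCH-TUE": Session("LUNCH-TUE", "Tuesday Lunch", "meal"),
}

# I was scheduled for B205 but actually walked into B101 and then went to lunch.
for session_id, questions in surveys_for({"B101", "LUNCH-TUE"}, catalog).items():
    print(session_id, questions)
```

With something like this, the skipped session never generates a survey, and lunch gets food questions instead of a speaker rating.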

I also recommend turning the map to the vertical rather than horizontal, as that would more efficiently use screen space on the user’s mobile device.

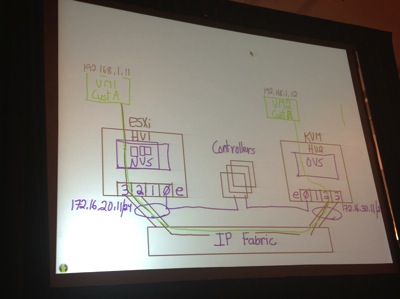

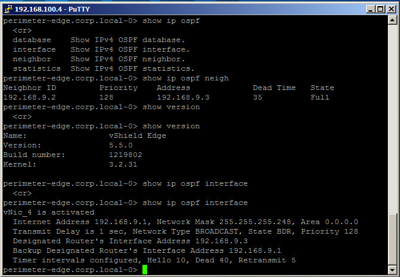

Atmosphere 2015 Network

I had the opportunity to attend the “Lessons Learned” session presented by the team that built and ran the show network and was enlightened by some of the lessons they learned. My compliments to all involved on providing us a solid wireless experience—I would expect nothing less from Aruba Networks! That being said, there are some improvements that can be made for next year. I understand some may be harder to do than others, but I just wanted to provide some “brainstorm ideas”:

- Provide IPv6 (I believe this was mentioned as being planned for next year)

- Don’t use NAT/PAT - Get a block of IPs from the service provider, both IPv4 and IPv6, just for the show. IPv6 especially was designed to eliminate the need for NAT, and I personally know people that have a seething hatred for NAT. My dislike for it isn’t quite that strong, but NAT is commonly (and incorrectly) assumed to be and used for a security mechanism. It’s not.

- Ensure upstream redundancy - maybe it was there but the presentation didn’t go into that level of detail on the wired network

- Provide read-only access to AirWave and ClearPass used for the show. This provides 100% transparency for what is going on and would be a HUGE selling point and learning experience for all attendees. An alternative to this would be to set up a NOC of some kind with outward facing screens that provide read-only access for us to take turns on and click around to learn more.

- Use the network overall to showcase products and technologies made by Aruba and Aruba’s partners. Perhaps physical security cameras attached to the show network with an HP storage array back-end? Aruba isn’t just about wireless anymore—prove it to us with this unique opportunity.

General

I had several positive comments on Twitter based on my sharing of the conference events. I live-tweeted the two keynotes as well as the lessons-learned session I attended. People genuinely want to participate and learn, even those that aren’t able to attend in person. Aruba could take advantage of this by providing live-streaming of key sessions, such as the keynotes and more popular breakouts, to virtual attendees for a cost lower than the on-site conference. This would of course require a fair amount of coordination and work, but I can see huge benefits to Aruba’s brand and its ability to cast its message far and wide. Virtual attendance combined with an increased emphasis on influencers in social media could be a major boon for Aruba, its partners, and its growing customer base.

The Firing Line was a panel of Aruba corporate leadership that listened as attendees stepped up to the microphone and asked any question they wanted. I understand this is the traditional close of the conference and I think it’s fantastic. It really adds transparency. I recommend this be live-streamed to virtual attendees, and it would be great if there were a live Twitter chat or some other online channel where virtual attendees could ask questions even though they’re not on-site.

The Tone

I was frankly shocked witnessing the open and verbal hostility towards Cisco at this conference. I’ve been a longtime Cisco customer and I love how Aruba systems like AirWave and ClearPass can interoperate with Cisco gear as well as kit from other vendors. In the interest of full disclosure, I participate in the Cisco Champion program and I use many of their products. No vendor has ever sold me something by putting down their competitor. In fact, when I hear a vendor doing that, it turns me off to considering them at all. You’ve got great equipment and great people. Some Aruba employees used to work for Cisco, and I talked to several Aruba folks that also didn’t like the hostile tone struck during this conference. When I heard Meg Whitman say “We’re going to beat Cisco” I saw that as totally inappropriate for this audience. It may be appropriate to say internally in a company, and maybe even to your partners. But definitely not to customers who still happily use “the enemy’s” products. You’re classier than that, Aruba. Don’t give your competitor any of your airtime (pun intended). Just sell me on the merits of your stuff. I’m smart enough to see the benefits, and that’s why I use your gear.

Conclusion

Overall, I found the conference to be very informative, enjoyable, and beneficial. I’m taking a gigaton of useful information and ideas to improve our systems and workflows back home, and I made new friendships and rekindled old ones that will continue to benefit me throughout the year on Twitter. Many thanks to those that planned and ran the event. And thanks to Aruba employees, partners, and ultra-smart customers for making me smarter. I would certainly enjoy attending Atmosphere 2016 if I’m able to.

Got questions or comments? Hit me up on Twitter (@swackhap) or drop a comment below.